Practical Ways Businesses Can Improve Server Performance and Uptime

Every business depends on its servers, even if people rarely think about them. When servers slow down or go offline, work stops, customers leave, and revenue can drop quickly. Many outages happen not because of major failures but because small issues go unnoticed for too long.

The good news is that improving server performance and keeping systems online does not always require huge investments. With the right steps, businesses can build stronger, more reliable systems. In this guide, we will look at practical ways to improve server performance, reduce downtime, and keep your digital operations running smoothly every day.

Start by Building an Honest Infrastructure Foundation

Every reliability effort worth pursuing begins with a clear-eyed look at what you’re actually running and what your infrastructure truly demands. Without that, expensive upgrades become expensive guesses.

Match Your Server Capacity to Real Business Demand

Before touching a single configuration file, map your workloads (web applications, databases, containers, VMs) to actual business priorities. Establish CPU utilization baselines, RAM consumption trends, storage IOPS, and network bandwidth benchmarks. Then create a prioritized “critical workloads” list tied to concrete performance targets, such as API p95 latency under 200ms or page loads under three seconds. Specificity matters here.
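To make a target like "p95 latency under 200ms" concrete, here is a minimal Python sketch that computes a nearest-rank percentile and checks it against a target. The function names and the sample latencies are invented for illustration, and nearest-rank is only one of several percentile definitions, so your monitoring tool may report slightly different numbers.

```python
import math

def percentile(samples, pct):
    """Nearest-rank percentile: the smallest sample such that at least
    pct percent of all samples are less than or equal to it."""
    if not samples:
        raise ValueError("need at least one sample")
    ordered = sorted(samples)
    rank = math.ceil(pct / 100 * len(ordered))
    return ordered[max(rank, 1) - 1]

def meets_target(samples_ms, target_ms=200, pct=95):
    """True when the chosen percentile is strictly under the target."""
    return percentile(samples_ms, pct) < target_ms

# Hypothetical API latencies in milliseconds (invented sample data).
latencies_ms = [120, 95, 180, 150, 110, 130, 450, 140, 105, 160]

mean_ms = sum(latencies_ms) / len(latencies_ms)
p95_ms = percentile(latencies_ms, 95)
print(f"mean={mean_ms:.0f}ms p95={p95_ms}ms ok={meets_target(latencies_ms)}")
```

In this sample the mean is 164ms, which looks healthy, while the p95 is 450ms and misses the target. That gap is why percentile targets are usually more useful than averages.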

If you want hands-on infrastructure support backed by over two decades of managed hosting experience, ColocationPLUS delivers colocation and server management services with physical redundancy built in, 99.99% network uptime guarantees, and expert support designed for both SMBs and enterprise teams.

Choose a Hosting Architecture That Fits Your Reliability Goals

On-premises, cloud VMs, hybrid setups, and colocation each carry distinct trade-offs. For consistently heavy workloads, strict compliance requirements, or a need for strong physical redundancy, colocation often delivers the most predictable performance and is usually simpler to manage than building and running your own data center.

With your infrastructure foundation properly aligned, shift from planning to action.

Quick Wins That Can Meaningfully Improve Server Uptime Within Days

Not every reliability improvement demands a six-month project. A few well-placed fixes can shift your stability picture significantly, and faster than you’d expect.

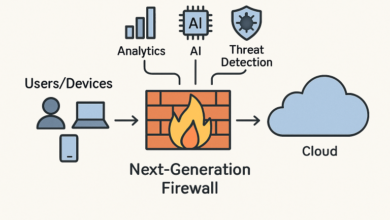

Hunt Down and Eliminate Single Points of Failure

Single points of failure hide in places you’d expect and plenty you wouldn’t: a solitary database node, one ISP connection, a single DNS provider, a server drawing from one power feed.

The fixes aren’t always complicated: add secondary DNS, configure active-passive database failover, deploy standby VMs, use dual power supplies. Run a SPOF audit across every critical service. Ask yourself what actually happens if each piece fails tonight.
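A SPOF audit can start as a simple inventory check. The sketch below assumes you keep a mapping from each critical service to the independent instances or providers behind it. The inventory contents and the `find_spofs` helper are hypothetical, and a real audit would also need to confirm that the listed instances are truly independent of each other.

```python
def find_spofs(inventory, minimum=2):
    """Return the services backed by fewer than `minimum` distinct instances."""
    return sorted(
        service
        for service, instances in inventory.items()
        if len(set(instances)) < minimum
    )

# Hypothetical inventory: service name -> independent instances or providers.
inventory = {
    "database": ["db-primary"],
    "dns_provider": ["provider-a", "provider-b"],
    "isp_uplink": ["isp-1"],
    "power_feed": ["feed-a", "feed-b"],
}

for service in find_spofs(inventory):
    print(f"SPOF: {service} has no redundancy")
```

With this sample inventory, the database and the ISP uplink are flagged, while DNS and power pass because each has two entries.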

Automate Patch Management Without Burning Down Production

Outdated software is a direct, documented path to unplanned outages. A sound patching process requires a staging environment, scheduled maintenance windows, pre-patch snapshots, and documented rollback procedures.

Treat OS patches, firmware updates, hypervisor patches, and application updates as separate workflows with separate owners. A monthly accountability matrix stops things from slipping through the cracks.

Core Tactics for Everyday Server Performance Optimization

You’ve closed the obvious gaps. Now comes the deeper, proactive work that keeps systems running smoothly on an ongoing basis, not just during incident reviews.

Right-Size Resources and Tune Storage for Consistent Output

Oversized VMs waste money. Undersized ones cause chronic CPU saturation and memory pressure. Use hypervisor reservations and limits to prevent noisy-neighbor problems before they surface. Performance baselines help you decide whether to scale vertically or horizontally, and that distinction has real cost implications.
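As a rough illustration of using baselines for right-sizing, the sketch below labels a VM from its CPU utilization samples. The 20% and 80% thresholds and the function name are assumptions chosen for the example, not recommendations. A real review would also look at memory, disk, and longer observation windows.

```python
def sizing_recommendation(cpu_samples_pct, low=20.0, high=80.0):
    """Classify a VM from CPU utilization samples (percent).

    - "oversized":  even the busiest sample stays below `low`
    - "undersized": the average sits above `high`
    - "ok":         anything in between
    """
    if not cpu_samples_pct:
        raise ValueError("need at least one sample")
    average = sum(cpu_samples_pct) / len(cpu_samples_pct)
    peak = max(cpu_samples_pct)
    if peak < low:
        return "oversized"
    if average > high:
        return "undersized"
    return "ok"

# Hypothetical baselines for three VMs (invented sample data).
baselines = {
    "report-vm": [5, 8, 12, 10],
    "api-vm": [85, 90, 95, 88],
    "web-vm": [30, 50, 70, 40],
}
for vm, samples in baselines.items():
    print(vm, sizing_recommendation(samples))
```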

Storage and database performance are where the most dramatic gains often hide. Prioritize SSD or NVMe storage for critical workloads, maintain separate volumes for OS, logs, and data, and run monthly database health checks: review slow queries, verify indexes, confirm connection pooling, and check whether backup jobs are colliding with business-hours traffic.

Advanced Monitoring and Resilience Design

Strong visibility and thoughtful architecture don’t just help you recover from failures; they help you survive them without anyone noticing.

Build Monitoring Around Business Outcomes, Not Just CPU Graphs

Tie alerts to what the business actually cares about: checkout success rates, login failures, transaction latency. Raw CPU percentages alone don’t tell you whether customers are suffering. Define incident tiers (critical, major, minor) with corresponding response SLAs, and build runbooks for your five most common alert types. Fewer signals, better signals. That’s the goal.
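One way to express incident tiers in code is a small table that maps a business metric to a tier and a response SLA. In the sketch below, the checkout failure-rate thresholds and the response times are placeholder assumptions. Pick values that reflect what a failed checkout actually costs you.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Tier:
    name: str
    min_failure_rate: float   # inclusive lower bound, as a fraction
    respond_within_min: int   # response SLA in minutes

# Ordered from most to least severe; all values are illustrative assumptions.
TIERS = [
    Tier("critical", 0.10, 15),
    Tier("major", 0.05, 60),
    Tier("minor", 0.02, 240),
]

def classify(failed, total):
    """Return the matching Tier for a checkout failure rate, or None."""
    if total <= 0:
        return None  # no traffic, nothing to classify
    rate = failed / total
    for tier in TIERS:
        if rate >= tier.min_failure_rate:
            return tier
    return None

tier = classify(failed=12, total=100)
if tier:
    print(f"{tier.name}: respond within {tier.respond_within_min} minutes")
```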

Design for High Availability and Actually Test Your Resilience

Active-active web tiers behind load balancers, database replication, and redundant hypervisors provide a solid HA baseline without requiring enterprise-scale spending.

But architecture alone isn’t enough. Run quarterly resilience drills. Simulate a link failure. Test database failover. Restore from backup. Validate whether your RTO and RPO targets actually match what the business can afford to lose.
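After a drill, the comparison against RTO and RPO is simple arithmetic on three timestamps. The following sketch assumes you record when the simulated failure began, when the last good backup or replica checkpoint was taken, and when service was restored. The function name and the sample times are invented.

```python
from datetime import datetime, timedelta

def evaluate_drill(failure_at, last_good_backup_at, service_restored_at, rto, rpo):
    """Compare measured recovery time and data-loss window to the targets."""
    recovery_time = service_restored_at - failure_at
    data_loss_window = failure_at - last_good_backup_at
    return {
        "recovery_time": recovery_time,
        "data_loss_window": data_loss_window,
        "rto_met": recovery_time <= rto,
        "rpo_met": data_loss_window <= rpo,
    }

# Hypothetical drill: targets are RTO = 1 hour, RPO = 45 minutes.
result = evaluate_drill(
    failure_at=datetime(2024, 1, 15, 2, 0),
    last_good_backup_at=datetime(2024, 1, 15, 1, 30),
    service_restored_at=datetime(2024, 1, 15, 3, 15),
    rto=timedelta(hours=1),
    rpo=timedelta(minutes=45),
)
print(result)
```

In this made-up drill the data-loss window (30 minutes) is within the RPO, but recovery took 1 hour 15 minutes and misses the RTO. That is the kind of gap a drill is meant to surface before a real outage does.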

Running Infrastructure That Holds Up

Server performance optimization and the sustained work to improve server uptime are not one-time projects. They’re operational disciplines that compound over time. Start with real visibility, remove single points of failure, tune your resources, and add resilience in deliberate layers.

The businesses that treat reliability as a continuous practice, not a fire-drill exercise, consistently outperform those that only react when something breaks. Your infrastructure deserves the same ongoing attention you give your product roadmap.


Frequently Asked Questions

Which metrics should business leaders prioritize?

Focus on uptime percentage, revenue-impacting incidents, average transaction latency, and post-outage recovery time. These four connect infrastructure health to business outcomes without requiring deep technical fluency.

How can small businesses improve uptime without a full IT department?

Managed monitoring tools, automated backups, lightweight scripting for routine tasks, and a colocation or MSP partnership give small teams enterprise-grade reliability without building a large internal staff.

Why does my website go down when the server looks fine?

DNS failures, upstream provider outages, SSL misconfigurations, application-level bugs, and third-party dependency failures can all cause user-facing downtime even when your own CPU and memory metrics appear completely normal.

What uptime targets are realistic for SMBs?

99.9% allows roughly 8.76 hours of downtime annually and is achievable with modest redundancy. Reaching 99.95% or 99.99% requires more sophisticated architecture and investment. Match the target to what downtime genuinely costs your specific business.
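The downtime budget behind each target is easy to compute yourself. This short sketch assumes a 365-day year, and the helper name is illustrative.

```python
HOURS_PER_YEAR = 365 * 24  # 8,760 hours, ignoring leap years

def downtime_budget_hours(uptime_pct):
    """Allowed downtime per year, in hours, for a given uptime percentage."""
    return (1 - uptime_pct / 100) * HOURS_PER_YEAR

for target in (99.9, 99.95, 99.99):
    hours = downtime_budget_hours(target)
    print(f"{target}% -> {hours:.2f} hours/year ({hours * 60:.0f} minutes)")
```

Under that assumption, 99.9% works out to about 8.76 hours a year, 99.95% to about 4.38 hours, and 99.99% to just under 53 minutes.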

How often should load tests run?

Quarterly at minimum, and always ahead of major releases or planned marketing campaigns. SaaS and ecommerce teams benefit from monthly lightweight tests to catch performance regressions before they hit production traffic.